the toolkit

The Extended View Toolkit is built entirely with the open-source real-time graphical programming environment Pure Data (Pd) and GEM. It uses only the basic functionality of Pd/GEM and does not rely on any externals. For OSC communication we recommend Martin Peach's mrpeach externals. Some functions in the toolkit use OpenGL shaders, so a recent graphics card (OpenGL >= v2.0) is needed to make use of the toolkit's whole potential *).

The whole toolkit is structured in a modular way so that users can easily adapt it to different camera setups or projection environments, rather than having to work with one complex patch whose functionality and inner structure are hard to understand.

Every abstraction comes with a help file, accessible via right-click --> help, that shows its properties and how to use it.

In general, the modules of the toolkit can be divided into groups. The following gives an overview (the zip also contains a Pd file called 01_ev_module-list.pd, which gives you a somewhat interactive overview of the modules):

*) Some functions also work with earlier OpenGL versions.

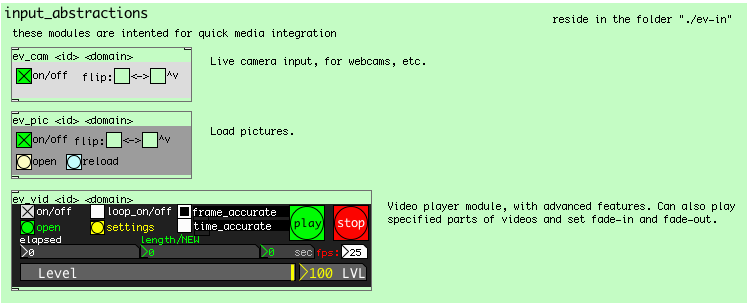

media input

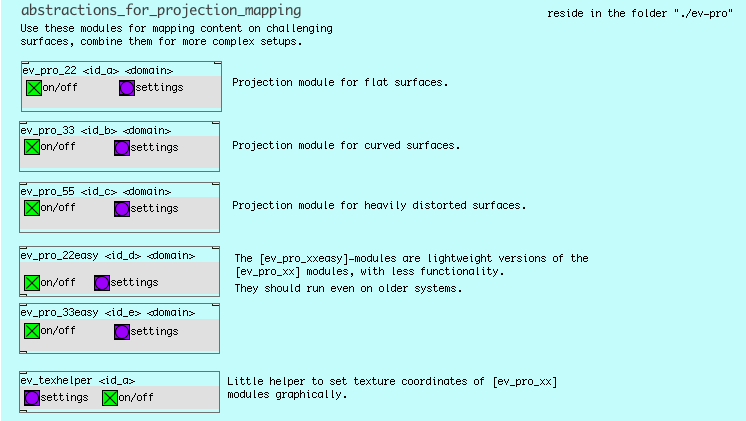

projection

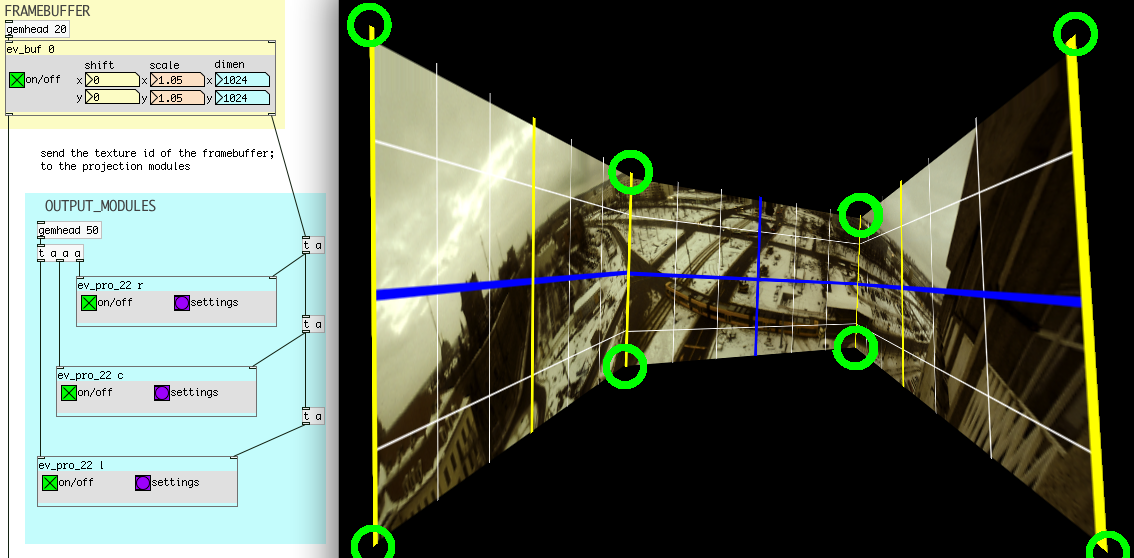

The output abstractions enable the creation of immersive projection environments. Since rooms with an ideal geometric shape for immersive projection setups are rare, it is necessary to be able to adjust the projected content freely in order to adapt the projection to the space. As a side effect, these abstractions are not only suitable for creating projection environments for panoramic video, but can also be used to map content onto different geometric forms such as cubes.

These projection modules can use a specified part of any video/image source or framebuffer as a texture. Because the texture position can be set individually for each module, multiple projection modules can be combined, each cutting its own part out of the same image source. With the ability to blend smoothly between modules, it is easy to create a continuous immersive projection with multiple projectors on uneven surfaces.
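As a rough illustration of how individually set texture positions and soft blending fit together, the following Python sketch splits one source image into overlapping horizontal slices, one per projection module, and computes a linear cross-fade weight for each module. It is not the toolkit's own implementation (the toolkit consists of Pd/GEM patches); the function names, the `overlap` parameter and the linear ramp are assumptions made for this example.

```python
def texture_slices(n_modules, overlap):
    """Return (left, right) horizontal texture ranges in 0..1, one per module.

    Neighbouring ranges share `overlap` (a fraction of the full texture
    width). All names and the layout rule are assumptions for illustration.
    """
    if n_modules < 1:
        raise ValueError("need at least one module")
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    # n * width - (n - 1) * overlap must cover the full texture width of 1.
    width = (1.0 + (n_modules - 1) * overlap) / n_modules
    step = width - overlap
    slices = [(i * step, i * step + width) for i in range(n_modules)]
    # Pin the outer edge to exactly 1.0 to avoid floating-point drift.
    slices[-1] = (slices[-1][0], 1.0)
    return slices


def blend_weights(u, slices, overlap):
    """Linear soft-edge weight of every module at texture position u.

    Inside an overlap one module fades out while its neighbour fades in.
    The sketch assumes the overlap is small enough that at most two
    modules cover any position.
    """
    last = len(slices) - 1
    weights = []
    for i, (left, right) in enumerate(slices):
        if u < left or u > right:
            weights.append(0.0)
            continue
        w = 1.0
        if overlap > 0.0:
            if i > 0:  # fade in on the left edge (not for the first module)
                w = min(w, (u - left) / overlap)
            if i < last:  # fade out on the right edge (not for the last one)
                w = min(w, (right - u) / overlap)
        weights.append(w)
    return weights
```

For three modules with `overlap=0.1`, the slices come out at roughly 0.0 to 0.4, 0.3 to 0.7 and 0.6 to 1.0. A linear ramp is only the simplest choice; real projectors usually need a gamma-corrected ramp for the overlap to look evenly bright.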

To enable computers with older graphics hardware to make use of the toolkit, there are two additional projection abstractions that do not rely on OpenGL shaders but offer almost the same functionality. They are called [ev_easymap22] for the 4-point variant and [ev_easymap33] for the 9-point variant.
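To give an idea of what a 4-point mapping does geometrically, here is a minimal Python sketch that places a point of the source texture inside a quad defined by four freely movable corner points, using bilinear interpolation. How [ev_easymap22] interpolates internally is not described here, so the bilinear scheme, the function names and the corner order are assumptions for illustration only.

```python
def lerp2(p, q, t):
    """Linear interpolation between two 2D points p and q."""
    return (p[0] + (q[0] - p[0]) * t, p[1] + (q[1] - p[1]) * t)


def bilinear_map(u, v, corners):
    """Map texture position (u, v) in 0..1 into a quad.

    `corners` is (bottom_left, bottom_right, top_left, top_right), each an
    (x, y) tuple. The order is a convention chosen for this sketch.
    """
    bl, br, tl, tr = corners
    bottom = lerp2(bl, br, u)   # position along the bottom edge
    top = lerp2(tl, tr, u)      # position along the top edge
    return lerp2(bottom, top, v)
```

With the four corners of the unit square the mapping is the identity; dragging one corner, for example the top right one to (2, 2), moves the centre of the texture to (0.75, 0.75). A 9-point variant could apply the same idea to each cell of a 3x3 grid of control points.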

panoramic image/video content

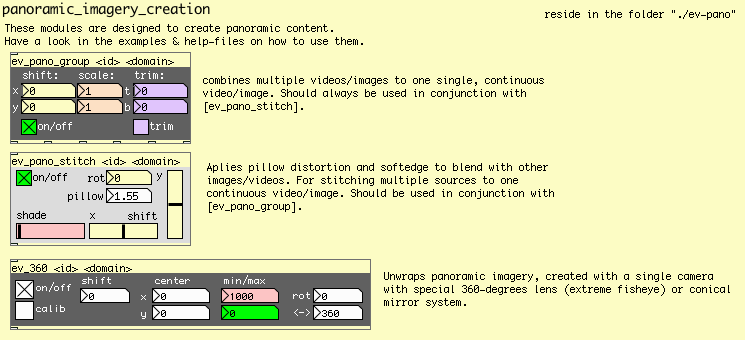

The abstractions in the ev_pano folder give you different ways to create panoramic content. First, we implemented a solution that lets you stitch imagery together in real time, be it multiple still images or multiple video files (recorded in sync). Just make sure that your source material is roughly aligned horizontally and overlaps a little. With the toolkit you can fine-tune the alignment, blend (soft-edge) the overlap area, and correct lens/pincushion distortion. Have a look at example 03a_ev_example_panoramic.pd that comes with the toolkit. Also have a look at the camera section of this webpage to get an idea of how you could build a multi-camera panoramic video camera.
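The soft-edge idea can be sketched in a few lines of Python: two rows of pixel values that share `overlap` pixels are joined, and inside the shared area the left source fades out while the right one fades in. This is a simplified illustration with grayscale values and a linear ramp, not the toolkit's own processing; `stitch_rows` and its parameters are hypothetical names.

```python
def stitch_rows(left, right, overlap):
    """Join two pixel rows that share `overlap` pixels with a linear cross-fade.

    `left` and `right` are lists of grayscale values; the last `overlap`
    pixels of `left` show the same scene as the first `overlap` of `right`.
    """
    if overlap < 0 or overlap > min(len(left), len(right)):
        raise ValueError("overlap must fit into both rows")
    if overlap == 0:
        return list(left) + list(right)
    out = list(left[:len(left) - overlap])
    for i in range(overlap):
        # t rises from just above 0 to just below 1 across the overlap.
        t = (i + 1) / (overlap + 1)
        a = left[len(left) - overlap + i]
        b = right[i]
        out.append((1.0 - t) * a + t * b)
    out.extend(right[overlap:])
    return out
```

Applied row by row to two roughly aligned images, this yields one wider image without a hard seam. Alignment and distortion correction would have to happen before this step.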

The second way of creating panoramic content that we cover uses a single camera. By using spherical lenses or mirrors, it is possible to capture a full 360-degree visual field. Such lenses are available even for mobile devices. Again, the toolkit lets you process these sources in real time and unwarp the circular source image into a flat, rectangular image. Open example 03b to see how it works.
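The unwarping step is essentially a polar-to-rectangular mapping. The Python sketch below shows the idea with nearest-neighbour sampling on a plain list-of-lists image: each output column corresponds to an angle around the image centre, each output row to a radius between an outer and an inner ring. It is not the toolkit's implementation; the parameter names (`cx`, `cy`, `r_inner`, `r_outer`) and the choice that the outer ring becomes the top row are assumptions, and the right orientation depends on the lens or mirror used.

```python
import math


def unwrap_coords(col, row, out_w, out_h, cx, cy, r_inner, r_outer):
    """Source (x, y) in the circular image for output pixel (col, row)."""
    angle = 2.0 * math.pi * col / out_w
    # Row 0 samples the outer ring, the last row the inner ring (assumption).
    radius = r_outer - (r_outer - r_inner) * row / max(out_h - 1, 1)
    return cx + radius * math.cos(angle), cy + radius * math.sin(angle)


def unwrap(image, out_w, out_h, cx, cy, r_inner, r_outer, fill=0):
    """Unwarp a circular image (list of rows) into an out_h x out_w rectangle."""
    src_h = len(image)
    src_w = len(image[0])
    out = []
    for row in range(out_h):
        line = []
        for col in range(out_w):
            x, y = unwrap_coords(col, row, out_w, out_h,
                                 cx, cy, r_inner, r_outer)
            xi, yi = int(round(x)), int(round(y))  # nearest-neighbour sample
            if 0 <= xi < src_w and 0 <= yi < src_h:
                line.append(image[yi][xi])
            else:
                line.append(fill)  # sample fell outside the source image
        out.append(line)
    return out
```

In a real-time setting the same mapping would typically be evaluated per pixel on the graphics card, with interpolated instead of nearest-neighbour sampling.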

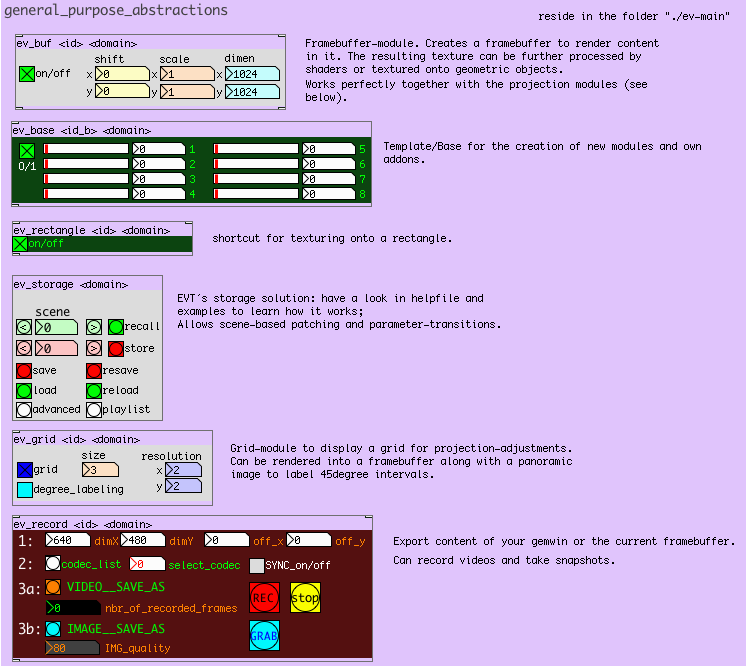

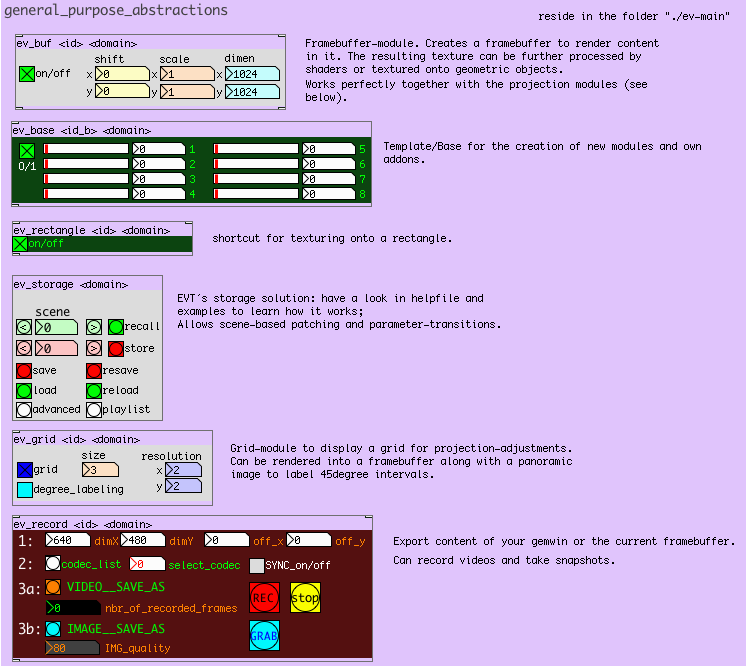

general purpose abstractions

In order to keep things organized and to provide necessary and helpful additional tools, more abstractions were built for and are used by the toolkit. Most importantly, there is an abstraction for creating a framebuffer ([ev_buf]), an abstraction ([ev_rec]) for recording the content of a framebuffer or the gemwin, and of course the abstractions for the storage/preset system.